Organismal biology and ecology have primarily been defined by what scientists could observe from the outside. Researchers mapped migration routes, measured metabolic rates, and sequenced genomes with clinical precision. Yet, the field remained locked out of the internal lives of its subjects. Animal behavior was often treated as reactive instinct rather than intentional choice. That wall is now crumbling. Decoding animal communication with AI is shifting the field’s perspective on animal behavior research.

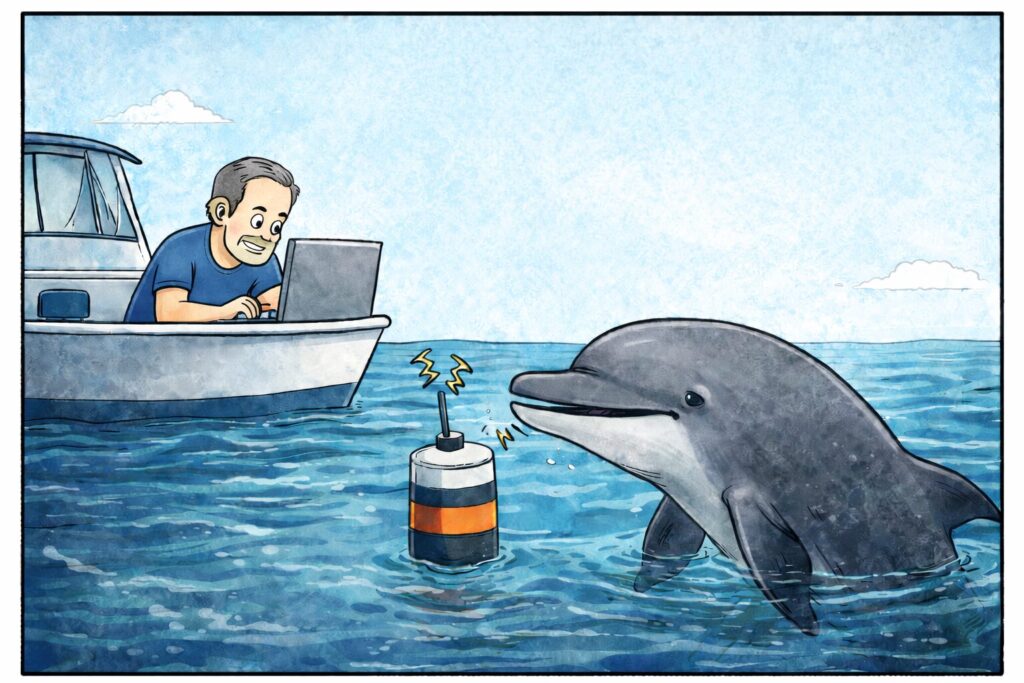

In practical terms, decoding animal communication with AI means using large models to find repeated acoustic units and patterns in animal sounds. Then researchers link those patterns to context, including behavior, ecology, and social setting. It does not produce human language translations. Instead, it produces structured, testable hypotheses about what signals predict, and when they matter. This fundamentally changes the scientific question from “what did it do?” to “what did it mean?”

Decoding Animal Communication with AI: A New Perspective in Ecology

In the past, ecology often treated animal sounds as simple, hardwired “signals.” Researchers categorized them as fixed biological responses to hunger, fear, or mating. However, the field missed deep nuances that only high-compute processing could reveal. The shift began in late 2024 and accelerated through 2025. Now, in early 2026, researchers are finding that nature is not silent. Instead, it is hypervocal and structurally complex.

The field is moving from passive recording to active decoding. This revolution is powered by decoding animal communication with AI. This approach allows researchers to process millions of hours of sound quickly. Consequently, scientists are no longer looking for simple patterns. Instead, they are looking for stable structure in communication systems. Recent milestones suggest that some animals use sound units with phonetic-like organization. This has changed how many researchers interpret nonhuman behavior.

This shift also demands a clear standard of evidence. Claims about meaning hold up best when structure repeats reliably, signals shift with context, and models generalize across groups and years. Strong results also link sound to observable outcomes, such as coordination, movement, or conflict. Even better, playback or other interventions trigger consistent responses. Decoding animal communication with AI can support each of these tests, but it cannot replace them.

The Engine Powering the Decoding of Animal Communication With AI

The tools used today are a far cry from the basic spectrum analyzers of previous years. The basis of recent progress lies in foundation models. In 2025, the release of NatureLM-audio changed many workflows for researchers. This model does not need human labels to learn useful structure in sound. Instead, it uses self-supervised learning to find its own internal logic.

In practice, these models convert audio into compact numerical representations. Those representations cluster into candidate units, and those units form higher-order patterns. Next, researchers align those patterns with video, movement, habitat, or social context. Then they score performance by prediction, error rates, and out-of-sample tests. That pipeline is the operational core of decoding animal communication with AI.
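To make the first two steps of that pipeline concrete, here is a minimal sketch in plain Python. It is an illustration under loud assumptions, not any project's actual code: the two-dimensional "embeddings" are invented toy numbers standing in for a model's output, and the tiny k-means routine with farthest-first initialization is a stand-in for whatever clustering a real study would use. It groups calls into candidate units, then counts which units tend to follow each other.

```python
import math
from collections import Counter

def nearest(point, centroids):
    """Index of the centroid closest to point (Euclidean distance)."""
    return min(range(len(centroids)), key=lambda i: math.dist(point, centroids[i]))

def farthest_first(points, k):
    """Deterministic start: first point, then repeatedly the point farthest from all picks."""
    centroids = [points[0]]
    while len(centroids) < k:
        centroids.append(max(points, key=lambda p: min(math.dist(p, c) for c in centroids)))
    return centroids

def kmeans(points, k, iters=10):
    """Tiny k-means for illustration only; keeps a centroid unchanged if its cluster is empty."""
    centroids = farthest_first(points, k)
    for _ in range(iters):
        groups = [[] for _ in range(k)]
        for p in points:
            groups[nearest(p, centroids)].append(p)
        centroids = [tuple(sum(col) / len(g) for col in zip(*g)) if g else c
                     for g, c in zip(groups, centroids)]
    return centroids

# Hypothetical 2-D "embeddings" for eight consecutive calls in one recording (toy data).
embeddings = [(0.1, 0.2), (5.0, 5.1), (0.2, 0.1), (5.2, 4.9),
              (0.0, 0.3), (9.9, 0.1), (0.1, 0.0), (5.1, 5.0)]

centroids = kmeans(embeddings, k=3)
units = [nearest(e, centroids) for e in embeddings]  # candidate unit label per call
bigrams = Counter(zip(units, units[1:]))             # which unit follows which
print(units)
print(bigrams.most_common(2))
```

The unit labels are arbitrary cluster indices. They only become scientifically interesting once the later steps align them with context and test them on held-out data.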

Furthermore, these models analyze pitch, rhythm, and timbre simultaneously. They can often pick out individual animals within a massive chorus. This capability is what makes the work feasible at scale. By linking sound to drone-captured video, researchers gain essential behavioral context. As a result, researchers can see what a chimpanzee is doing while it vocalizes. This multimodal integration is becoming a gold standard in 2026. It supports a fuller view of nonhuman life than earlier methods allowed.

Using AI to Make Sense of Whale Communication

A widely discussed breakthrough involves the sperm whale. For years, researchers have known that sperm whales communicate via codas, or rhythmic sequences of clicks. Project CETI has reported patterns that the team describes as an “alphabet-like” system. Using AI to decode these recordings, the group was able to predict aspects of whale behavior with reported accuracy near 80%.

Here is what that claim is trying to capture. Researchers first collect long, high-quality recordings from identified whales in known social contexts. They then align codas with observable events, such as group reunions, coordinated dives, or changes in spacing. Decoding animal communication with AI allows them to test whether certain coda patterns reliably precede those outcomes.

The “alphabet-like” idea does not mean letters with fixed dictionary meanings. Instead, it points to a set of recurring coda types that combine timing, rhythm, and sequence structure. Models treat these patterns like units that can be classified and recombined at scale. In that framing, “80% accuracy” often means the system predicts a context label from recent codas correctly about four times in five, a figure that is informative only when compared against chance or a simple baseline.
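A quick sketch shows why an accuracy figure needs a baseline next to it. The labels below are invented for illustration and are not Project CETI data. The "majority baseline" is simply the score from always guessing the most common context, which is one common stand-in for chance.

```python
from collections import Counter

def accuracy(predicted, actual):
    """Share of predictions that match the true label."""
    return sum(p == a for p, a in zip(predicted, actual)) / len(actual)

def majority_baseline(actual):
    """Accuracy of always guessing the most common label."""
    return Counter(actual).most_common(1)[0][1] / len(actual)

# Hypothetical context labels for ten coda bouts (toy data, not real results).
actual = ["dive", "dive", "dive", "dive", "dive", "dive",
          "reunion", "reunion", "reunion", "forage"]
predicted = ["dive", "dive", "dive", "dive", "dive", "reunion",
             "reunion", "reunion", "dive", "forage"]

model_acc = accuracy(predicted, actual)
baseline = majority_baseline(actual)
print(f"model accuracy: {model_acc:.2f}")
print(f"majority baseline: {baseline:.2f}")
print(f"lift over baseline: {model_acc - baseline:.2f}")
```

In this toy case the model scores 0.80, but a rule that always says "dive" already scores 0.60. The meaningful number is the gap between the two, and that gap shrinks as one context comes to dominate the dataset.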

Another striking claim involves vowel-like structure. Research groups, including teams at UC Berkeley, report phonetic properties in click sequences. Therefore, these clicks may function like speech in limited, specific ways. These sounds may be conveying socially relevant information tied to identity, coordination, or location. Some teams also report boundary-like clicks that may structure interaction.

On the technical side, the vowel-like claim is usually about stable spectral patterns that recur across many codas. The argument is that these patterns behave like a constrained set of acoustic “shapes,” not random variation. Supportive analyses try to rule out confounds such as whale orientation, depth, or recording geometry. Even so, decoding animal communication with AI still needs stronger tests, especially interventions and replication.

Decoding Animal Communication with AI in Other Species

The success of decoding animal communication with AI is not limited to the sea. In forests and other land habitats, researchers are seeing parallel progress. The basic idea is the same. Record a lot of sound, link it to what animals are doing, and then ask whether patterns in the calls predict real outcomes.

Recent 2026 findings on chimpanzee call combinations are drawing attention because they move beyond single calls. Studies report that specific combinations predict collective actions more reliably than any one sound alone. For example, a hoot followed by a pant can predict something different than a hoot by itself. The order matters, and the pairing seems to change what the group does next. This is still a long way from human language. However, it suggests a simple kind of rule-based structure, where call units can be combined to change meaning in a consistent way.

Decoding animal communication with AI helps here because it can search for these combinations at scale. Instead of relying on a small set of hand-labeled examples, models can scan thousands of hours of audio and flag recurring sequences. Then researchers can test those sequences against visible outcomes, such as group movement, coordinated displays, or changes in social spacing. The key point is that the method turns a fuzzy impression into a measurable prediction that can be checked and replicated.
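The sequence-scanning idea can be sketched in a few lines. Everything here is hypothetical: the call names, the bouts, and the "group moved" outcome are toy values chosen to show the bookkeeping, not results from any chimpanzee study. The function counts how many bouts contain each run of calls and what share of those bouts was followed by the outcome.

```python
from collections import defaultdict

def outcome_rates(bouts, n):
    """For each run of n consecutive calls: (bouts containing it, share followed by the outcome)."""
    tally = defaultdict(lambda: [0, 0])  # pattern -> [bouts seen, bouts with outcome]
    for calls, moved in bouts:
        # A set means each pattern is counted at most once per bout.
        patterns = {tuple(calls[i:i + n]) for i in range(len(calls) - n + 1)}
        for pattern in patterns:
            tally[pattern][0] += 1
            tally[pattern][1] += int(moved)
    return {p: (seen, hits / seen) for p, (seen, hits) in tally.items()}

# Hypothetical bouts: (sequence of calls, did the group move afterwards?)
bouts = [
    (["hoot", "pant"], True),
    (["hoot", "pant"], True),
    (["hoot", "pant", "hoot"], True),
    (["hoot"], False),
    (["hoot", "hoot"], False),
    (["pant", "hoot"], False),
    (["pant"], False),
    (["hoot", "pant"], False),
]

singles = outcome_rates(bouts, 1)
pairs = outcome_rates(bouts, 2)
print(singles[("hoot",)], singles[("pant",)])
print(pairs[("hoot", "pant")], pairs[("pant", "hoot")])
```

In this toy set, the ordered pair "hoot then pant" precedes movement more often than either call alone or the reverse order. A real analysis would need far more data, a significance test, and held-out groups before reading anything into such a gap.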

Furthermore, the Earth Species Project has deployed mini biologgers on wild crows. These devices are small enough to collect close-range vocalizations that microphones in the environment often miss. They have captured large numbers of calls across many daily interactions. AI is now mapping those sounds onto social context, including who is nearby, what just happened, and how the group is organized.

With AI-assisted decoding, researchers are testing whether crows track relationships across time. They do this by asking whether the pattern of sounds changes depending on who the crow is dealing with. Some analyses suggest that crows behave as if they remember past interactions and adjust future alliances. That kind of inference remains hard, because memory is not directly observable. Still, the resolution is improving as datasets grow and models get better at separating signal from noise.

Limits and Shortcomings to Decoding Animal Communication with AI

Even strong models face a ground truth problem. Researchers cannot directly observe “meaning” in the way they can measure body temperature or gene expression. Instead, meaning has to be inferred from context and outcomes. That creates a risk of circular reasoning, where a model seems to find meaning only because the labels already reflect human assumptions about what a scene implies. Context can also mislead for simpler reasons. A call might correlate with hunting, conflict, or reunion, but correlation does not guarantee that the call carries that information.

Recording conditions also bias results. Microphones differ in frequency response and noise handling. Distance changes what gets captured, especially for faint calls. Habitat acoustics matter, because forests, open water, and rocky shorelines filter sound in different ways. Season and weather also change vocal behavior and audibility. If datasets are uneven across these factors, decoding animal communication with AI may learn recording artifacts rather than communication structure.

Models can also learn shortcuts. They may recognize individual identity, location, or a specific background sound and use that as a proxy for context. In that case, the system looks accurate while missing the communication content entirely. This is why careful evaluation matters, including out-of-sample tests across different groups, different years, and different recording setups. Replication across sites and years is especially important for headline claims.
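One way to expose a shortcut is to hold out an entire group at a time, so the model is always scored on animals it has never heard. The sketch below is a toy version of that idea. The records, the group names, and the lookup-table "model" are all assumptions made for illustration. It compares a predictor based on the call unit with a shortcut predictor based on which group the recording came from.

```python
from collections import Counter, defaultdict

def leave_one_group_out(records, group_key):
    """Yield (held-out group, training records, test records) for each group in turn."""
    for held_out in sorted({r[group_key] for r in records}):
        train = [r for r in records if r[group_key] != held_out]
        test = [r for r in records if r[group_key] == held_out]
        yield held_out, train, test

def fit_lookup(train, feature):
    """Map each feature value to its most common context in the training data."""
    votes = defaultdict(Counter)
    for r in train:
        votes[r[feature]][r["context"]] += 1
    return {value: c.most_common(1)[0][0] for value, c in votes.items()}

def score(lookup, test, feature):
    """Share of held-out records predicted correctly; unseen feature values count as wrong."""
    return sum(lookup.get(r[feature]) == r["context"] for r in test) / len(test)

# Hypothetical records: which group, which candidate call unit, which observed context.
rows = [("A", "u1", "travel"), ("A", "u1", "travel"), ("A", "u2", "rest"),
        ("B", "u2", "rest"), ("B", "u2", "rest"), ("B", "u1", "travel"),
        ("C", "u1", "travel"), ("C", "u2", "rest")]
records = [{"group": g, "unit": u, "context": c} for g, u, c in rows]

results = {}
for held_out, train, test in leave_one_group_out(records, "group"):
    by_unit = score(fit_lookup(train, "unit"), test, "unit")
    by_group = score(fit_lookup(train, "group"), test, "group")  # the shortcut
    results[held_out] = (by_unit, by_group)
print(results)
```

In this toy data the unit-based predictor carries over to every unseen group, while the group-identity shortcut scores zero because it has nothing to say about a group it never saw. The same splitting logic applies to years or recording setups by changing the key passed to the splitter.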

Metaphors can also distort interpretation. Terms like alphabet, vowels, and punctuation can help readers picture patterns, but they are not equivalences to human language. They often describe statistical regularities, not grammar with shared definitions. Decoding animal communication with AI works best when it stays tethered to measurable predictions, such as whether a call sequence reliably precedes an observable action or changes another animal’s behavior in playback tests. I find that restraint increases impact, because it keeps the science ahead of the story and makes the strongest results harder to dismiss.

Ethical Frontiers of Decoding Animal Communication with AI

With power comes responsibility. The ability to decode language-like structure raises ethical questions for the scientific community. The first issue is interpretation. If decoding animal communication with AI assigns structure to calls, researchers may be tempted to treat those patterns as settled meaning. That can inflate claims, and it can also shape policy and public attitudes.

In late 2025, a movement began to grant legal personhood to whales. This is based on their demonstrated agency and complex communication. If they can share information, do they have rights? The argument has coherence, but governance still looks underbuilt. Even if personhood is not the outcome, the underlying question remains. What obligations follow once researchers can plausibly show that animals exchange rich, socially relevant information?

There is also the issue of talking back. Using information gained from decoding animal communication with AI to mimic calls could disrupt behavior at scale, especially if playback becomes common or automated. Even well-intentioned interventions could alter mating, parenting, migration, or conflict dynamics. The ethical burden grows when the animals are endangered, when the habitat is stressed, or when the model is not well validated.

In addition, data from decoding animal communication with AI can be a powerful tool for conservation, but it helps to separate two uses. One is species surveillance and behavioral indicators. In that mode, researchers use vocal signatures to detect rare species, estimate presence and abundance, map habitat use, and flag stress or disturbance when call patterns shift. Because the recording can run continuously, it can reveal decline or disruption earlier than visual surveys.

The other is acoustic monitoring, where the same microphones and models detect events in an ecosystem. In that monitoring mode, researchers can detect illegal logging in real time by listening for sound signatures. Systems listen for chainsaws or gunshots in protected areas. Those sounds are not animal communication, but they matter because they threaten the animals being studied and conserved. Moreover, researchers monitor ecosystem health through acoustic diversity. A healthy forest is often a loud forest.
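For readers wondering how "acoustic diversity" becomes a number, one common family of measures applies Shannon entropy to how sound energy is spread across frequency bands. The sketch below is a simplified illustration of that idea, not a published index as implemented in any toolkit. The band energies are invented values, and real indices work from spectrograms with additional thresholds and normalization.

```python
import math

def shannon_diversity(band_energy):
    """Shannon entropy (natural log) of the share of energy in each frequency band."""
    total = sum(band_energy)
    shares = [e / total for e in band_energy if e > 0]
    return -sum(p * math.log(p) for p in shares)

# Hypothetical energy in four frequency bands at two sites (arbitrary units).
quiet_site = [9.0, 0.5, 0.3, 0.2]  # one band dominates, e.g. wind or a single caller
busy_site = [2.5, 2.5, 2.5, 2.5]   # energy spread evenly across bands

print(f"quiet site: {shannon_diversity(quiet_site):.3f}")
print(f"busy site:  {shannon_diversity(busy_site):.3f}")
```

Higher values mean sound is spread across more bands, which often, though not always, tracks a richer community of callers. Heavy rain or machinery can also fill many bands, so a score like this is a prompt for closer listening rather than a verdict.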

There is also a dual-use concern. The same systems that locate rare species can help poachers or traffickers. In practice, the risk is not only the model, but the data layer. Location-tagged recordings and real-time detection can become a targeting tool. Data ownership also matters, especially when recordings come from Indigenous lands or protected habitats. The field needs norms for consent, access, model release, and safeguards. Decoding animal communication with AI will move faster than policy unless researchers build friction in early.

Conclusion: A New Era in Decoding Animal Communication with AI

As 2026 unfolds, the horizon is bright. Researchers are not building a simple “Google Translate for Dogs.” Instead, they are building tools to test communication hypotheses across species. Decoding animal communication with AI is creating a rudimentary dictionary for the planet, but it will be provisional and revisable. It reconnects humans with the natural world through evidence rather than projection.

Researchers must continue to push these boundaries with discipline. Every recording collected is a piece of a larger biological puzzle. The field is moving past the era of casual human certainty. Instead, scientists are beginning to listen with better instruments and sharper tests. By the end of this year, researchers may understand signals that were previously invisible to biology. The conversation has begun. Are you ready to listen?

Be sure to visit bleedingedgebiology.com next week for another “bleeding edge” topic!

Your thoughts

Decoding animal communication with AI is a fast-moving field, and the interpretation is where it gets interesting. What do you think counts as convincing evidence of meaning rather than pattern matching? Where do you draw the line between a useful metaphor like “alphabet” and an overreach that confuses readers?

I am also curious which applications feel most valuable to you. Is your interest mainly in conservation and monitoring, or in the deeper question of communication and social life?

Finally, what ethical guardrails would you want in place before researchers start “talking back” with synthetic calls? If you have a favorite project, paper, or dataset in this space, share it in the comments.

Bleeding Edge Biology recommends

If readers want to go deeper into the subject of decoding animal communication with AI, the resources below are the fastest route to the real work. I chose items that show the ideas, the datasets, and the evaluation mindset.

Talks and short videos that set the frame

Could an orca give a TED Talk? | Karen Bakker | TED

Bakker gives a crisp overview of why “translation” is hard, and what the near-term wins look like. She also connects decoding animal intelligence with AI to ethics and governance.

Can we learn to talk to sperm whales? | David Gruber | TED

This is the most accessible introduction to Project CETI’s strategy and ambitions. It frames decoding animal communication with AI as a data problem before it becomes a “meaning” problem.

How AI is decoding the language of sperm whales | Pratyusha Sharma | TED

A direct window into the “phonetic alphabet” framing and the modeling logic behind it. It pairs well with the Nature Communications paper listed below.

Exploring the mysterious alphabet of sperm whales | MIT News

MIT’s explainer is unusually concrete about the dataset scale and the kinds of structure they claim to detect. It is a good bridge from narrative to primary literature.

Core projects and hands-on starting points

Project CETI

Start here if readers want the flagship “whale side” of decoding animal intelligence with AI. The site links to papers, field methods, and research updates.

Earth Species Project

ESP is the best “general platform” view of decoding animal communication with AI. Their blog often explains methods and failure modes in plain language.

NatureLM-audio demo

This is a practical way to see what “foundation model for bioacoustics” means. It makes the interaction loop tangible, even for non-coders.

NatureLM-audio code and model releases

Useful for readers who want to inspect the training setup, benchmarks, and intended use cases. This is where decoding animal communication with AI becomes reproducible work.

BirdNET

BirdNET is not “translation,” but it shows the scaling logic that made decoding animal communication with AI possible. It is also a strong example of real-world deployment.

Reporting and longer reads that add context

Unlocking Avian Secrets: Tiny biologgers and carrion crows | Earth Species Project

A concrete case where better sensors plus ML uncover low-amplitude social calls. It shows how decoding animal communication with AI depends on close-range, contextual data.

UC Berkeley and Project CETI: vowel-like structure in codas

A readable summary of the “very slow vowels” claim, with a link to the open access paper. It is a good example of how framing can outrun evidence.

The Sounds of Life | Karen Bakker (book)

This book is a wide-angle introduction to bioacoustics, sensors, and the promise and risks of decoding animal communication with AI. It also helps keep the hype in check.

Primary literature

Contextual and combinatorial structure in sperm whale vocalisations | Nature Communications (2024)

This is the “phonetic alphabet” foundation paper behind Project CETI’s 2024 announcements. It formalizes rhythm, tempo, rubato, and ornamentation as structured features.

Capturing vocal communication in a free-living corvid | Animal Cognition (2025)

This is the crow biologger study behind the “full repertoire” story. It highlights how device design enables better ground truth for decoding animal communication with AI.

Versatile use of chimpanzee call combinations promotes communication efficiency | Science Advances (2025)

This expands the combinatorics story with more context dependence. It is a good example of how careful inference should look.

NatureLM-audio: an Audio-Language Foundation Model for Bioacoustics | arXiv (2024)

This is the technical backbone for the “foundation model” claims. It is directly relevant to decoding animal communication with AI as an ML paradigm.

BirdNET: A deep learning solution for avian diversity monitoring | Ecological Informatics (2021)

This is a widely cited baseline for large-scale animal sound classification. It helps readers separate mature monitoring from harder “meaning” claims.

Using machine learning to decode animal communication | Science (2023)

A high-level perspective piece that maps the field and its pitfalls.